For the last fifty years, we have witnessed the dawn of the robotics industry. But robots have not been created with security as a concern, and security in robotics is often mistaken for safety. From industrial to consumer robots, including professional ones, most of these machines are neither prepared for cyber-threats nor resilient to security vulnerabilities. Manufacturers' concerns, as well as existing standards, focus mainly on safety. Security in robotics is not considered a relevant matter and is disregarded when compared to quality and safety.

This article touches on the differences and correlation between quality, safety and security from a robotics perspective. The integration between these areas from a risk assessment perspective was studied in [1] [2], which resulted in a unified security and safety risk framework. Commonly, safety in robotics is understood as developing protective mechanisms against accidents or malfunctions, whilst security aims to protect systems against risks posed by malicious actors [3]. A slightly different view is the one that considers safety as protecting the environment from a given robot, whereas security is about protecting the robot from a given environment.

In this article, the latter view is adopted.

Safety is understood as protecting the environment from a given robot, whereas security is about protecting the robot from a given environment.

A look into literature

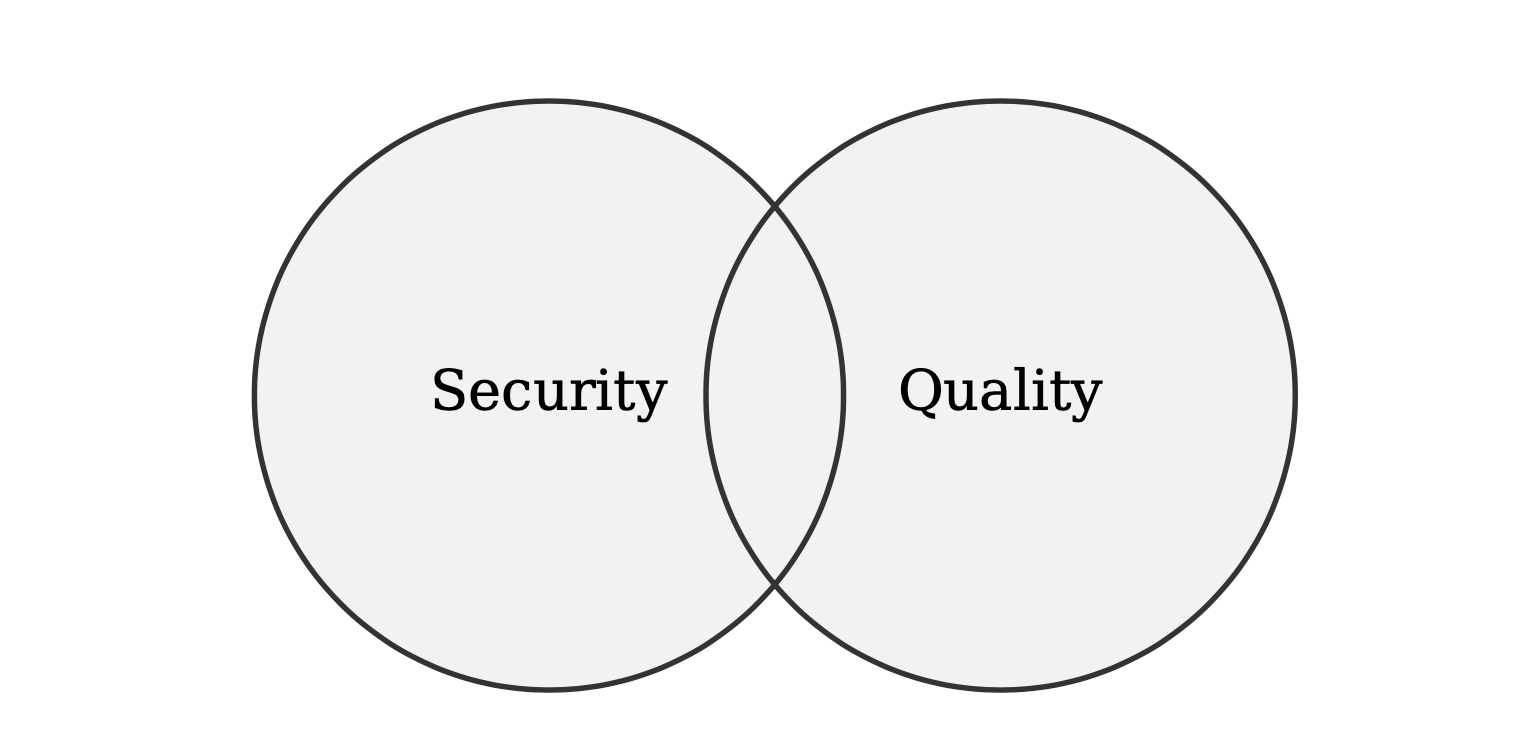

Looking for further insights, I turn to the literature and see that Quality (Quality Assurance, or QA for short) and Security are often misunderstood when it comes to software. Ivers argues [4] that quality "essentially means that the software will execute according to its design and purpose" while "security means that the software will not put data or computing systems at risk of unauthorized access". Within [4:1], one relevant question that arises is whether quality problems are also security issues or vice versa. Ivers indicates that quality bugs can turn into security ones provided they're exploitable, and addresses the question by remarking that quality and security are critical components of a broader notion, software integrity, as depicted in the Figure below:

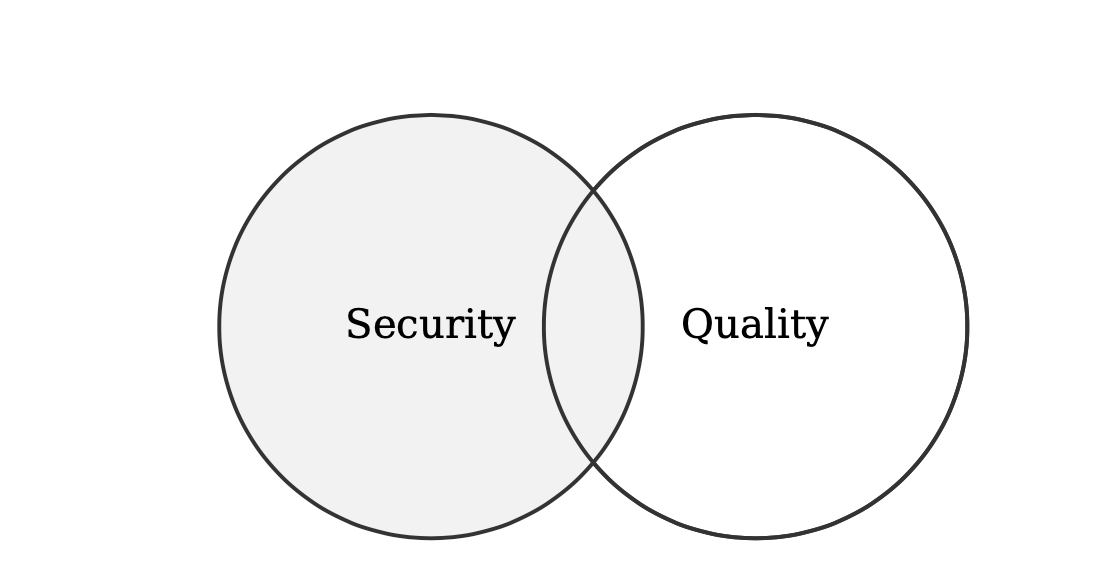

Coming from the same group, Vamosi [5] argues that "quality code may not always be secure, but secure code must always be quality code". This somewhat conflicts with the previous view and leads one to think that secure software is a subset of quality software. I disagree with this view and argue instead that Quality and Security remain two separate properties of software that may intersect on certain aspects (e.g. testing), as depicted in the following Figure:

Often, secure code and quality code share several requirements and the mechanisms used to assess them. These include testing approaches such as static code analysis, dynamic testing, fuzz testing or software composition analysis (SCA), among others.
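To make the overlap concrete, here is a minimal fuzz-testing sketch. Everything in it is an assumption made for illustration: the packet layout (1-byte command id, 2-byte big-endian length, payload) and the names `parse_command` and `fuzz` are hypothetical and do not come from any real robot stack. It shows how the same test serves both quality (the parser behaves as designed) and security (malformed input is rejected in a controlled way rather than crashing).

```python
import random
import struct
from typing import Callable, List, Tuple


def parse_command(packet: bytes) -> Tuple[int, bytes]:
    """Parse a hypothetical robot command packet: id (1 byte), length (2 bytes), payload."""
    if len(packet) < 3:
        raise ValueError("packet too short")
    command_id, length = struct.unpack(">BH", packet[:3])
    payload = packet[3:3 + length]
    if len(payload) != length:
        # Without this check a truncated packet would be silently accepted:
        # a quality bug that could become a security issue if exploitable.
        raise ValueError("declared length does not match payload")
    return command_id, payload


def fuzz(parser: Callable[[bytes], object], iterations: int = 1000, seed: int = 0) -> List[bytes]:
    """Feed random byte strings to `parser` and collect inputs that fail unexpectedly."""
    rng = random.Random(seed)
    crashes = []
    for _ in range(iterations):
        size = rng.randrange(0, 16)
        packet = bytes(rng.randrange(256) for _ in range(size))
        try:
            parser(packet)
        except ValueError:
            pass  # expected: controlled rejection of malformed input
        except Exception:
            crashes.append(packet)  # anything else is a finding worth triaging
    return crashes
```

Random byte strings are the crudest form of fuzzing; coverage-guided fuzzers are far more effective in practice, but the principle of treating every uncontrolled failure as a finding is the same.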

In robotics there is a clear separation between security and quality that is best understood with scenarios involving robotic software components. For example, someone building an industrial Autonomous Mobile Robot (AMR) or a self-driving car would often need to comply with coding standards (e.g. MISRA [6] for developing safety-critical systems). The same system's communications, however, regardless of its compliance with coding standards, might rely on a channel that provides neither encryption nor authentication and is thereby subject to eavesdropping and man-in-the-middle attacks. Security would be a strong driver here and, as remarked by Vamosi [4:2], "neither security nor quality would be mutually exclusive, there will be elements of both".
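As an illustration of what such a channel lacks, the following sketch adds message authentication and basic replay protection to a command message using only the Python standard library. The message format, the pre-shared key and the function names `sign_command` and `verify_command` are assumptions made for this example, not part of any robotics middleware. Note that it provides authentication and integrity only: the payload is still sent in the clear, so eavesdropping would remain possible without additional encryption.

```python
import hashlib
import hmac
import json

TAG_SIZE = 32  # length in bytes of an HMAC-SHA256 tag


def sign_command(key: bytes, command: dict, counter: int) -> bytes:
    """Serialize a command with a monotonically increasing counter and prepend an HMAC tag."""
    body = json.dumps({"counter": counter, "command": command}, sort_keys=True).encode()
    tag = hmac.new(key, body, hashlib.sha256).digest()
    return tag + body


def verify_command(key: bytes, message: bytes, last_counter: int) -> dict:
    """Return the command if the tag is valid and the counter is fresh, else raise ValueError."""
    tag, body = message[:TAG_SIZE], message[TAG_SIZE:]
    expected = hmac.new(key, body, hashlib.sha256).digest()
    # compare_digest avoids leaking, through timing, how many bytes matched
    if not hmac.compare_digest(tag, expected):
        raise ValueError("authentication failed")
    data = json.loads(body)
    if data["counter"] <= last_counter:
        raise ValueError("replayed or out-of-order message")
    return data["command"]
```

A receiver would keep track of the last accepted counter and pass it as `last_counter`; a message modified in transit or replayed by a man-in-the-middle is then rejected, however well the rest of the code complies with a coding standard.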

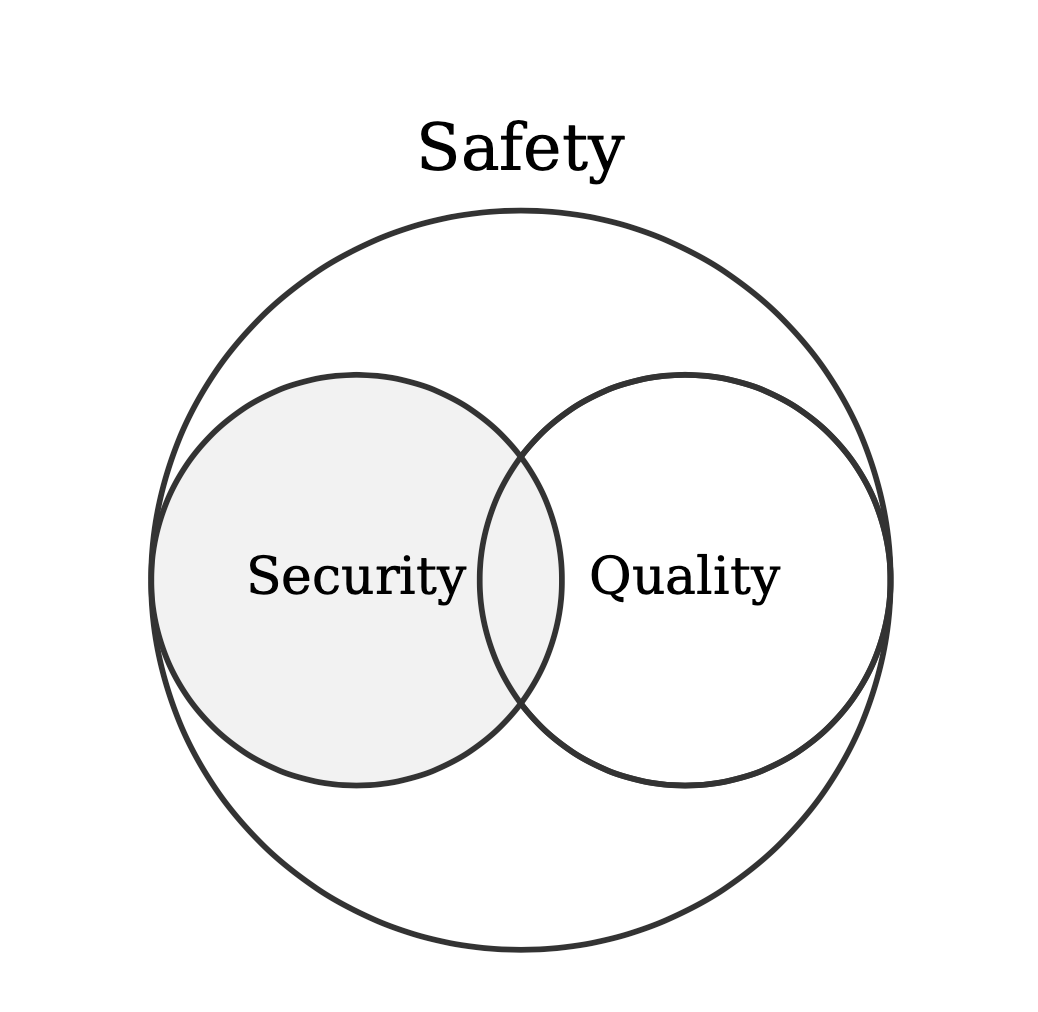

Quality in robotics, still in its early stages [7], is often viewed as a pre-condition for safety-critical systems. Similarly, as argued by several authors, safety can't be guaranteed without security [8], [9]. Coding standards such as MISRA C have been extended [10] [11] to become the C coding standard of choice for the automotive industry and for all industries developing embedded systems that are safety-critical and/or security-critical [9:1]. As introduced by ISO/IEC TS 17961:2013, "in practice, security-critical and safety-critical code have the same requirements". This statement is partly supported by Goertzel [8:1], who also emphasizes the importance of software remaining dependable under extraordinary conditions and the interconnection between safety and security in software. The same argument was later extended by Bagnara [9:2], who acknowledges that the fact that embedded systems are no longer isolated plays a key role in the relationship between safety and security. According to Bagnara, "while safety and security are distinct concepts, when it comes to connected software" (non-isolated) "not having one implies not having the other", referring to integrity.

From my readings so far, coding standards such as MISRA or ISO/IEC TS 17961:2013 for safety-critical and security-critical software components do not guarantee that the final robotic system will be secure and, thereby, safe. As illustrated in the example above, robotics involves a significant degree of system integration and inter-connectivity (non-isolated embedded systems connected together internally and, potentially, externally as well). As such, both secure and ultimately safe robotics systems need not only to ensure quality by complying with coding standards but also to guarantee that they aren't exploitable by malicious attackers.


In the traditional view of system security, safety is often understood as "nothing bad happens naturally", while security intuitively indicates that "nothing bad happens intentionally". The following table summarizes the concepts discussed so far:

| Concept | Interpretation | Reference/s |
|---|---|---|
| Safety | Safety cares about the possible damage a robot may cause in its environment. Commonly used taxonomies define it as the union of integrity and the absence of hazards ($\textrm{Safety} = \textrm{Integrity} + \textrm{Absence of catastrophic consequences}$). | [2:1] [8:2] [9:3] |
| Security | Security aims at ensuring that the environment does not disturb the robot operation, also understood as that the robot will not put its data, actuators or computing systems at risk of unauthorized access. This is often summarized as $\textrm{Security} = \textrm{Confidentiality} + \textrm{Integrity} + \textrm{Availability}$. | [2:2] [8:3] [9:4] [4:3] |
| Quality | Quality means that the robot's software will execute according to its design and purpose. | [4:4] |
| Integrity | Integrity can be described as the absence of improper (i.e., out-of-spec) system (or data) alterations under normal and exceptional conditions. | [9:5] |

Security, as understood in the Table above, shares integrity with safety. As discussed in [9:6] [8:4], "the only thing that distinguishes the role of integrity in safety and security is the notion of exceptional condition. This reflects the fact that exception conditions are perceived as accidental (safety hazards) or intentional (security threats)". The latter, security threats, are always connected to vulnerabilities. A vulnerability is a mistake in software or hardware that can be directly used by an arbitrary malicious actor to gain access to a system or network and operate it in an undesirable manner [12]. In robotics, security flaws such as vulnerabilities are of special relevance given the physical connection to the world that these systems imply. As discussed in [2:3], "Safety cares about the possible damage a robot may cause in its environment, whilst security aims at ensuring that the environment does not disturb the robot operation. Safety and security are connected matters. A security-first approach is now considered as a prerequisite to ensure safe operations".
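The two taxonomies quoted above can be written down as plain sets to make the shared property explicit. This is only a toy sketch under the assumption that the "+" in those definitions can be read as a set union; the names `SAFETY`, `SECURITY` and `classify_exceptional_condition` are illustrative and not taken from the cited works.

```python
# Properties as summarized in the taxonomies discussed in the text.
SAFETY = frozenset({"integrity", "absence of catastrophic consequences"})
SECURITY = frozenset({"confidentiality", "integrity", "availability"})


def shared_properties(a: frozenset, b: frozenset) -> frozenset:
    """Return the properties that both concepts rely on."""
    return a & b


def classify_exceptional_condition(intentional: bool) -> str:
    """Accidental conditions are safety hazards; intentional ones are security threats."""
    return "security threat" if intentional else "safety hazard"


print(shared_properties(SAFETY, SECURITY))
```

Under this reading, integrity is the only property the two concepts have in common, and what differs is whether the exceptional condition that violates it is accidental or intentional.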


Robot security vulnerabilities are potential attack points in robotic systems that can lead not only to considerable losses of data but also to safety incidents involving humans. Some claim [13] that unresolved vulnerabilities are the main cause of loss in cyber incidents. The mitigation and patching of vulnerabilities has been an active area of research [14], [15], [16], [17], [18], [19] in computer science and other technological domains. Unfortunately, even though robotics is an interdisciplinary field composed of a set of heterogeneous disciplines (including computer science), to the best of my knowledge and according to my literature review at the time of writing, not much vulnerability mitigation research related to robotics has been presented so far.

Where are we then?

Having researched and further understood these concepts, one wonders: "where are we in robotics?"

Leaving aside military-related endeavours (which historically a) are much better resourced and b) give these matters higher consideration), I turn to current industrial and consumer robots and ask myself the following questions: can current robotic systems in these areas be considered truly safe? Is regulation currently requiring manufacturers to take security, quality and safety seriously? And, ultimately, are there good examples out there of companies caring openly and responsibly about these aspects?

Let's take one at a time.

Can current robotic systems in these areas be considered truly safe?

In most cases, the answer is no. While there are some restrictions and safety considerations/norms that apply to certain domains, security is still catching up in most areas of application. Note that safety can't be guaranteed without security [8:5] [9:7]. The Industry 4.0 movement, together with the growth of ransomware-based business models, seems to be raising awareness; however, there is still a long road ahead.

At the time of writing, as I review existing safety considerations and documents to obtain approvals for tests with self-driving cars in public parks, security is barely considered. While the systems engineering approach is completely valid for building functionality, one can't rely on later stages (connected to test, deployment or maintenance) to tackle security. This is the biggest mistake that's currently happening in the autonomous vehicles field. Security needs to be considered holistically and from the very beginning. Security is a process, not a singular activity. This is further covered in a recent technical report my team produced [20].

Turning to the less hazardous (but more intrusive) robots that we regard as current consumer-oriented robots, most of them are completely insecure and vulnerable, as shown by different studies and sources, [21] being one of the most widely distributed.

Is regulation currently requiring manufacturers to take security, quality and safety seriously?

After having read more than a dozen robotic and security standards, applied a few and even contributed to building some, I can guarantee that most robotic safety standards are not considering security. Systems complying solely with these norms will be unsafe when faced with cyber attacks. At the time of writing, some of these robotics norms are being reviewed; however, the outlook isn't very promising. Security and safety are being kept separate, referring to different norms (even across standardization bodies, in a non-coherent manner) and without imposing strong requirements ("must" in that jargon) whatsoever.

If these norms were at least met by industry, the situation would merely be bad. Unfortunately, it's worse. Nations and organizations around the world set different requirements. Some acknowledge plain robot hazards due to functionality issues and require functional safety standards to be in place. Some other countries care about safety yet do not require or enforce that their industries comply with specific robotics norms (defaulting to old machinery standards).

Beyond functional safety hazards, we'll soon start seeing security-related hazards with robots. And it feels like we are far from prepared for it.

Are there good examples out there of companies caring openly and responsibly about these aspects?

Two come to mind, one for industrial robots and another for healthcare robots, that present a good enough example, especially when looked at from a security angle:

- ABB: While I personally feel that ABB should heavily improve their policies and mitigations, they've been committed to safety, quality and security for a long time already. Their business model, blackbox-based, advocates for security by obscurity, yet public security advisories (many leaving vulnerabilities still unpatched) have been presented in the past. They are one of the very early robot vendors that has matured into following a security-first approach.
- Intuitive Surgical: The company behind the DaVinci surgical robot. My interactions with them so far (more will come in the short term and I may have additional bits to add) have shown how much they care about quality and security.

Beyond these two, many names come to mind of companies that either totally disregard security or barely touch it. Some of this will be covered in future articles.

Fortunately, a new generation of robotic startups is appearing that (claim to) care about security. From producing robot software components to complete robot systems, these new firms include security in their plans. Some of them even promise to deliver "complete" security. A bit daring in some cases, and without real backing in others, yet a positive development, and one I hope many more will follow soon.

1. Stoneburner, G. (2006). Toward a unified security-safety model. Computer, 39(8), 96-97.

2. Kirschgens, L. A., Ugarte, I. Z., Uriarte, E. G., Rosas, A. M., & Vilches, V. M. (2018). Robot hazards: from safety to security. arXiv preprint arXiv:1806.06681.

3. Eames, D. P., & Moffett, J. (1999, September). The integration of safety and security requirements. In International Conference on Computer Safety, Reliability, and Security (pp. 468-480). Springer, Berlin, Heidelberg.

4. Ivers, J. (2017, March). Security vs. quality: What's the difference? [Online]. Available: https://www.securityweek.com/security-vs-quality-what’s-difference

5. Vamosi, R. (2017, March). Does software quality equal software security? Synopsys. [Online]. Available: https://www.synopsys.com/blogs/software-security/does-software-quality-equal-software-security/

6. Ward, D. D. (2006). MISRA standards for automotive software.

7. Pichler, M., Dieber, B., & Pinzger, M. (2019, February). Can I depend on you? Mapping the dependency and quality landscape of ROS packages. In 2019 Third IEEE International Conference on Robotic Computing (IRC) (pp. 78-85). IEEE.

8. Goertzel, K. M., & Feldman, L. (2009, September). Software survivability: where safety and security converge. In AIAA Infotech@Aerospace Conference and AIAA Unmanned... Unlimited Conference (p. 1922).

9. Bagnara, R. (2017). MISRA C, for Security's Sake!. arXiv preprint arXiv:1705.03517.

10. MISRA. (2016, April). MISRA C:2012 Amendment 1: Additional security guidelines for MISRA C:2012. Tech. Rep., HORIBA MIRA Limited, Nuneaton, Warwickshire, UK.

11. MISRA. (2016, April). MISRA C:2012 Addendum 2: Coverage of MISRA C:2012 against ISO/IEC TS 17961:2013 "C Secure". Tech. Rep., HORIBA MIRA Limited, Nuneaton, Warwickshire, UK.

12. Pfleeger, C. P., & Pfleeger, S. L. (2002). Security in Computing (3rd ed.). Prentice Hall Professional Technical Reference.

13. Zheng, C., Zhang, Y., Sun, Y., & Liu, Q. (2011, June). IVDA: International vulnerability database alliance. In 2011 Second Worldwide Cybersecurity Summit (WCS) (pp. 1-6). IEEE.

14. Ma, L., Mandujano, S., Song, G., & Meunier, P. (2001). Sharing vulnerability information using a taxonomically-correct, web-based cooperative database. Center for Education and Research in Information Assurance and Security, Purdue University, 3.

15. Alhazmi, O. H., Malaiya, Y. K., & Ray, I. (2007). Measuring, analyzing and predicting security vulnerabilities in software systems. Computers & Security, 26(3), 219-228.

16. Shin, Y., Meneely, A., Williams, L., & Osborne, J. A. (2010). Evaluating complexity, code churn, and developer activity metrics as indicators of software vulnerabilities. IEEE Transactions on Software Engineering, 37(6), 772-787.

17. Finifter, M., Akhawe, D., & Wagner, D. (2013). An empirical study of vulnerability rewards programs. In 22nd USENIX Security Symposium (USENIX Security 13) (pp. 273-288). Washington, D.C.: USENIX.

18. McQueen, M. A., McQueen, T. A., Boyer, W. F., & Chaffin, M. R. (2009). Empirical estimates and observations of 0day vulnerabilities. In 2009 42nd Hawaii International Conference on System Sciences (pp. 1-12).

19. Bilge, L., & Dumitras, T. (2012). Before we knew it: An empirical study of zero-day attacks in the real world. In Proceedings of the 2012 ACM Conference on Computer and Communications Security (CCS '12) (pp. 833-844). New York, NY, USA: ACM.

20. Mayoral-Vilches, V., García-Maestro, N., Towers, M., & Gil-Uriarte, E. (2020). DevSecOps in Robotics. arXiv preprint arXiv:2003.10402.

21. Cerrudo, C., & Apa, L. (2017). Hacking robots before skynet. IOActive Website, 1-17.